AI Data Security

AI data security is essential for startups because modern AI systems handle dynamic data, models, and user interactions that can easily expose sensitive information if not properly protected. Key practices include minimizing and sanitizing data before training, encrypting model weights, securing RAG pipelines, and using guardrails like prompt filtering and output monitoring to prevent leaks or attacks. By building security into the AI lifecycle early, startups can protect intellectual property, meet compliance requirements, and gain the trust needed to scale and win enterprise clients.

How to Ensure AI Data Security for Startups

Building an AI-driven startup feels like an exhilarating, high-speed race. However, in the rush to ship features, many founders accidentally accumulate "security debt" that can sink the ship before it ever hits a Series B. We’ve moved past the days of just protecting static rows in a SQL database. Today, we are using AI data security to defend dynamic models that learn and remember. If left unguarded, they might accidentally whisper your most sensitive trade secrets to a competitor through a simple chat box.

Securing your AI data isn't just a "nice-to-have" task for a rainy day. It is the bridge between being a "scrappy project" and becoming a mature enterprise solution. If you want to land that Fortune 500 contract or survive a brutal due diligence phase, you need a strategy that treats security as a core organ of your AI’s architecture, not just a fence you build around it later.

Key Takeaways

- Clean Your Data Early: Mask sensitive PII before it ever touches a training set or vector store. This helps your model learn useful concepts without memorizing private identities.

- Guard the "Brain" Itself: Encrypt and sign your model weights as Tier-1 assets. This stops hackers from stealing your intellectual property or tampering with your AI’s logic.

- Lock Down the RAG Pipeline: Keep your vector databases in private networks with per-tenant encryption. These layers ensure the AI doesn't accidentally grab data from the wrong customer account.

- Set Up Smart Guardrails: Use real-time filters to catch prompt injections and accidental data leaks. This is your final safety net to ensure secrets don't slip out in a model's response.

What Is AI Data Security?

Think of AI Data Security as the specialized craft of protecting an AI system's entire life cycle. This covers everything: the raw training data, the vector databases used for Retrieval-Augmented Generation (RAG), the model weights (your IP), and the actual prompts and responses.

Standard security usually focuses on locking files. In contrast, AI security is about managing what a model "knows" and making sure it doesn't leak that knowledge to the wrong person.

Why Must Your Startup Prioritize AI Security Right Now?

The AI "Gold Rush" has created a strange paradox: the same tech that accelerates your growth is often your biggest liability. In 2026, this isn't a niche IT issue; it’s the foundation of your company’s survival.

- Protecting Your Competitive Moat: For most of us, the "secret sauce" is the unique data used to tune the model. If those weights or datasets are leaked, a competitor can clone your entire product’s intelligence overnight.

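- The "Unlearning" Nightmare: Privacy laws like the GDPR give people a "Right to be Forgotten" (the right to erasure), and the EU AI Act adds data-governance duties on top. Because you can't easily pull one person's data back out of trained weights, training on unfiltered private data could force you to retrain, or even delete, the entire model.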

- Stopping Agentic Hijacking: As we move toward "Agentic AI"—models that can move money or send emails—security is about operational safety. One clever, malicious prompt could trick your agent into dumping your entire customer list.

- Winning Enterprise Trust: Big corporate clients won't touch your tool unless you can prove their data won't "leak" into your general model.

- Fighting Machine-Speed Attacks: Hackers are using AI to find bugs in your code faster than any human could. You need automated, behavior-based defenses just to keep up.

How Can You Redefine Your Perimeter Through Data Minimization?

The safest data is the stuff you never gave the model in the first place. It's tempting to "feed the beast" everything to get better results, but that creates a permanent liability.

- The Sanitization Pipeline: Before data hits a training set, run it through a scrub layer. Use tools to mask names, addresses, and SSNs.

- Deterministic Redaction: Instead of just deleting info, swap it with a placeholder (e.g., [CUSTOMER_ID_REDACTED]). The model still understands context without seeing sensitive values.

- Differential Privacy: If you're handling user analytics, try adding mathematical "noise" to the dataset. This lets the AI learn trends without identifying individuals.
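A minimal sketch of the sanitization and deterministic-redaction steps above, assuming a keyed hash (HMAC-SHA256) is used to derive each placeholder so repeated mentions of the same value share one token. The key, pattern set, and function names are hypothetical, and the two regexes are deliberately narrow: names and street addresses need a dedicated PII/NER tool.

```python
import hashlib
import hmac
import re

# Hypothetical key for deriving stable placeholders; load a real one from a
# secrets manager and never hard-code it.
REDACTION_KEY = b"replace-with-a-secret-from-your-vault"

# Deliberately narrow patterns for illustration. Names and street addresses
# need an NER-based detector; regexes alone will miss them.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def placeholder(label: str, value: str) -> str:
    """Map a sensitive value to a stable token such as [EMAIL_1a2b3c4d]."""
    digest = hmac.new(REDACTION_KEY, value.encode(), hashlib.sha256).hexdigest()
    return f"[{label}_{digest[:8]}]"


def redact(text: str) -> str:
    """Replace every match with its keyed, deterministic placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(lambda m, label=label: placeholder(label, m.group(0)), text)
    return text


if __name__ == "__main__":
    record = "Ticket from jane@example.com, SSN 123-45-6789. jane@example.com replied."
    # Both mentions of the email become the same token; the SSN gets its own.
    print(redact(record))
```

The key matters: an unkeyed hash of an SSN can be reversed by brute force because there are only about a billion possible values, while an HMAC token can't be recomputed without the secret.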

How Will You Protect the "Brain" and Your Model Repositories?

Losing a customer list is bad, but losing your model weights is a total disaster. It’s the blueprint of your entire business.

- Cryptographic Signing: Sign every model version before it goes live, and verify that signature every time the model is loaded. This prevents tampering attacks where someone swaps your model for a malicious (for example, backdoored) version.

- Encrypted Storage: Treat model files (like .bin or .safetensors) as Tier-1 secrets. Encrypt them at rest with Customer-Managed Keys (CMK) so that you control key access, rotation, and revocation, and a leaked copy of the storage bucket stays unreadable without the key.

- Just-In-Time (JIT) Access: Data scientists often have "god-mode" access, which is a huge risk. Use JIT access so that high-level permissions only exist for the hour they’re actually training.
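To make the sign-then-verify step concrete, here is a minimal sketch that streams a weights file through SHA-256 and checks a tag before loading. It uses HMAC-SHA256 from Python's standard library as a stand-in for a true signature; a real pipeline would more likely use an asymmetric scheme (for example Ed25519 or Sigstore's cosign) so the serving side only holds a public key. The file name, key, and function names are hypothetical.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path

CHUNK_SIZE = 1 << 20  # stream large weight files 1 MiB at a time


def file_digest(path: Path) -> bytes:
    """SHA-256 of a (possibly multi-GB) model file, read in chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(CHUNK_SIZE), b""):
            h.update(chunk)
    return h.digest()


def sign_model(path: Path, key: bytes) -> str:
    """Tag the release pipeline stores next to the artifact (HMAC stand-in for a signature)."""
    return hmac.new(key, file_digest(path), hashlib.sha256).hexdigest()


def verify_model(path: Path, key: bytes, expected_tag: str) -> bool:
    """Call before loading weights; compare_digest avoids timing leaks."""
    return hmac.compare_digest(sign_model(path, key), expected_tag)


if __name__ == "__main__":
    key = b"hypothetical-signing-key"  # real keys belong in a KMS or HSM
    with tempfile.TemporaryDirectory() as d:
        weights = Path(d) / "model.safetensors"
        weights.write_bytes(b"\x00" * 1024)  # placeholder bytes, not a real model
        tag = sign_model(weights, key)
        print(verify_model(weights, key, tag))  # True
        weights.write_bytes(b"\x01" * 1024)     # simulate a swapped file
        print(verify_model(weights, key, tag))  # False
```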

How Should Your Engineers Secure the RAG Fortress?

Most of us use RAG to give our AI access to private company docs. However, this creates a massive shortcut for hackers, where the AI accidentally acts as a "proxy" to bypass traditional database permissions.

The Golden Rule: Your AI should only have read-only access to the specific tables it needs. Never give your AI agent "Superuser" credentials.
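The golden rule is easiest to enforce in the database itself rather than in the prompt. As a minimal sketch, the snippet below uses SQLite (standing in for your production database) and its `mode=ro` URI option to hand the agent a connection that cannot write; the file, table, and function names are hypothetical. Note that `mode=ro` is database-wide: scoping access to specific tables, as the rule asks, needs the role and `GRANT SELECT` system of a server database such as PostgreSQL.

```python
import sqlite3
import tempfile
from pathlib import Path


def open_read_only(db_path: Path) -> sqlite3.Connection:
    """Connection for the AI agent: SQLite rejects any write through it."""
    # mode=ro only takes effect when the path is passed as a URI (uri=True).
    return sqlite3.connect(f"{db_path.resolve().as_uri()}?mode=ro", uri=True)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        db = Path(d) / "app.db"
        # Admin/migration connection, kept out of the agent's reach.
        admin = sqlite3.connect(db)
        admin.execute("CREATE TABLE faq (q TEXT, a TEXT)")
        admin.execute("INSERT INTO faq VALUES ('hours?', '9-5')")
        admin.commit()
        admin.close()

        agent = open_read_only(db)
        print(agent.execute("SELECT a FROM faq").fetchone())  # ('9-5',)
        try:
            agent.execute("DELETE FROM faq")
        except sqlite3.OperationalError as exc:
            print("write blocked:", exc)
        agent.close()
```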

- Isolate Your Vectors: Keep your vector store (Pinecone, Milvus, etc.) inside a Private VPC. It should never be visible to the public internet.

- Per-Tenant Keys: If you're a B2B company, use a different encryption key for every customer. If a retrieval bug or a crafted prompt pulls in another customer's chunks, a request served with only the current tenant's key simply won't be able to decrypt them.
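Per-tenant encryption works best alongside a tenant filter at retrieval time. Python's standard library has no AES, so this sketch shows only the filtering half: an in-memory stand-in for a vector store that restricts candidates to the caller's tenant before ranking by cosine similarity. The class and field names are hypothetical; most vector databases offer an equivalent metadata-filter or namespace feature.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    tenant_id: str
    text: str
    embedding: tuple[float, ...]


def cosine(a, b) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TenantScopedStore:
    """In-memory stand-in for a vector DB that never ranks across tenants."""

    def __init__(self) -> None:
        self._chunks: list[Chunk] = []

    def add(self, chunk: Chunk) -> None:
        self._chunks.append(chunk)

    def search(self, tenant_id: str, query: tuple[float, ...], k: int = 3) -> list[Chunk]:
        # tenant_id must come from the authenticated session, never from the
        # prompt, and the filter runs BEFORE similarity ranking.
        candidates = [c for c in self._chunks if c.tenant_id == tenant_id]
        candidates.sort(key=lambda c: cosine(c.embedding, query), reverse=True)
        return candidates[:k]


if __name__ == "__main__":
    store = TenantScopedStore()
    store.add(Chunk("acme", "Acme pricing sheet", (1.0, 0.0)))
    store.add(Chunk("globex", "Globex pricing sheet", (1.0, 0.0)))
    # An identical embedding from another tenant is never returned.
    print([c.text for c in store.search("acme", (1.0, 0.0))])  # ['Acme pricing sheet']
```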

How Can You Defend the "Front Gate" of Prompts?

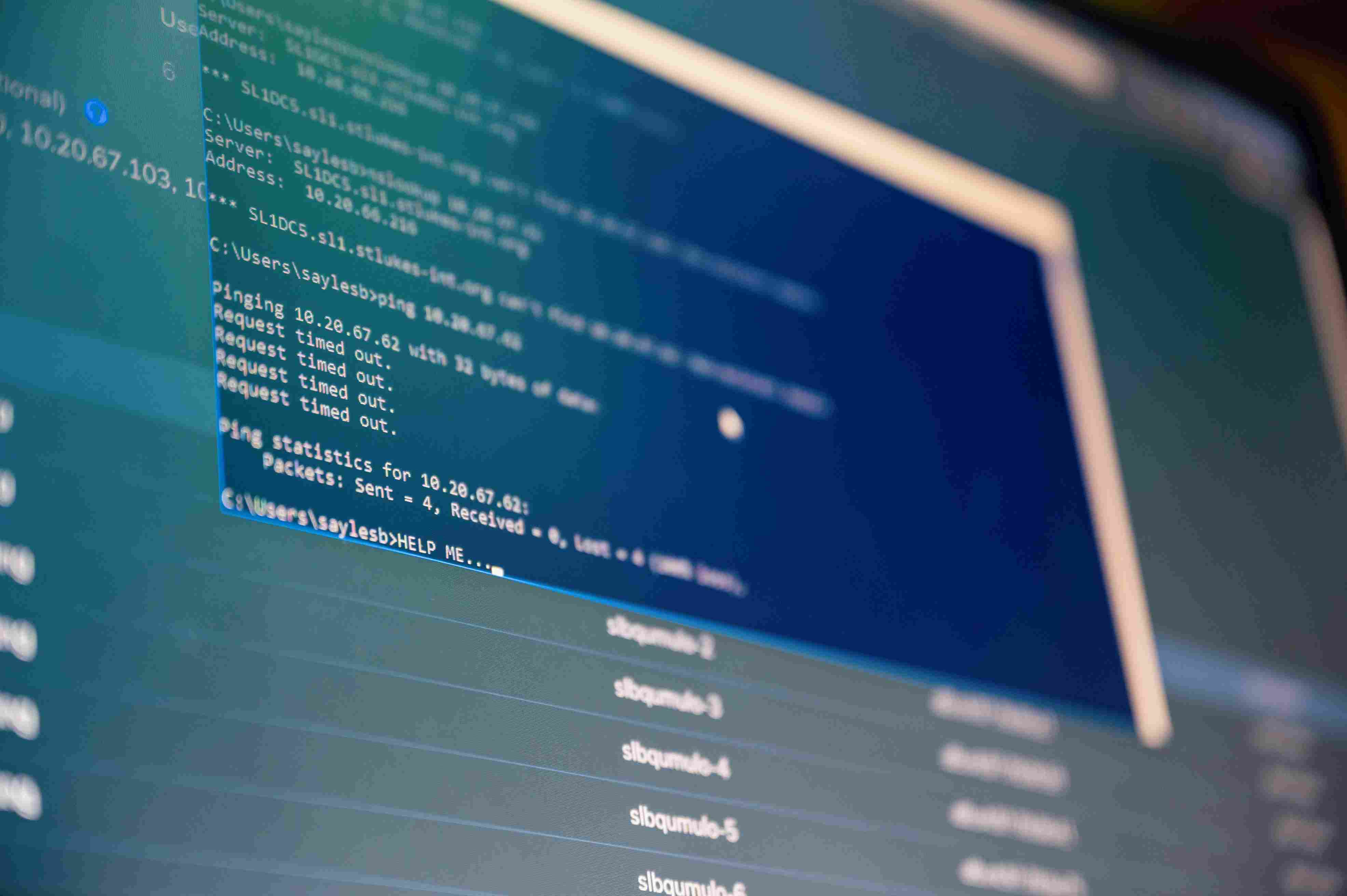

In the world of LLMs, a "prompt" is basically a piece of code. Prompt Injection—tricking an AI into ignoring its rules—is the new SQL injection.

- Security LLM Layer: Put a smaller, faster model in front of your main one. Its only job is to check prompts for malicious intent before they reach your main infrastructure.

- The Output Filter: This is your final safety net. Scan the AI’s response before the user sees it. If it tries to spit out an API key or a credit card number, the filter catches and redacts it.

- Citations are Mandatory: Force your AI to cite its sources. This makes auditing a breeze; if the model leaks something it shouldn't, you can trace it back to the exact source file.
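The output filter can start as simple pattern matching. This is a minimal sketch, not a complete DLP system: it redacts strings shaped like long-term AWS access key IDs or "sk-" style API keys (an assumed format), and card-like digit runs that pass the Luhn checksum. The patterns and function names are illustrative assumptions to tune for your own stack.

```python
import re

# Illustrative patterns; tune them to the credentials your stack actually uses.
SECRET_PATTERNS = [
    re.compile(r"\bAKIA[0-9A-Z]{16}\b"),     # long-term AWS access key ID shape
    re.compile(r"\bsk-[A-Za-z0-9_-]{20,}"),  # assumed "sk-" style API key shape
]
# 13 to 19 digits, optionally separated by single spaces or dashes.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum used by payment card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def _mask_card(match: re.Match) -> str:
    digits = re.sub(r"\D", "", match.group(0))
    # Only redact checksum-valid numbers so most order IDs and phone numbers survive.
    return "[CARD_REDACTED]" if luhn_valid(digits) else match.group(0)


def filter_output(text: str) -> str:
    """Scrub a model response before it is shown to the user."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[SECRET_REDACTED]", text)
    return CARD_CANDIDATE.sub(_mask_card, text)


if __name__ == "__main__":
    reply = "Use key AKIAABCDEFGHIJKLMNOP and card 4111 1111 1111 1111."
    print(filter_output(reply))
    # Use key [SECRET_REDACTED] and card [CARD_REDACTED].
```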

How Can Compliance Be a Growth Engine for Your Team?

With the EU AI Act's obligations now phasing in, security is a competitive advantage. Startups that build this in early can scale much faster because they aren't constantly fixing old mistakes.

- Immutable Logs: Keep an append-only, tamper-evident record of every prompt, retrieval, and output. You'll need this for forensics and for passing SOC 2 or ISO/IEC 42001 audits.

- AISPM Tools: Use AI Security Posture Management to find "Shadow AI." You'd be surprised how many employees are uploading sensitive code to unapproved tools.

- Transparency Reports: Be honest with your users. Tell them exactly how their data is used. Today, trust is more valuable than your GPU cluster.
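One common way to make a log tamper-evident is a hash chain: each entry stores the hash of the previous one, so editing, reordering, or removing an earlier entry breaks verification. The sketch below is an in-memory illustration with hypothetical names; it makes tampering detectable rather than impossible, and it can't catch truncation of the newest entries unless you also anchor the latest hash somewhere external, such as write-once (WORM) storage like S3 Object Lock. Redact payloads before logging so the audit trail doesn't become a new PII store.

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # "previous hash" for the first entry


def _digest(body: dict) -> str:
    # sort_keys gives a canonical serialization so the hash is reproducible.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class HashChainedLog:
    """Append-only log: each entry commits to the hash of the entry before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, event_type: str, payload: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"ts": time.time(), "type": event_type, "payload": payload, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; edits, reordering, or removal of a non-final entry break it."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = HashChainedLog()
    log.append("prompt", {"user": "u-42", "text": "What is on my invoice?"})
    log.append("output", {"user": "u-42", "text": "Your invoice total is on page 2."})
    print(log.verify())  # True
    log.entries[0]["payload"]["text"] = "edited after the fact"
    print(log.verify())  # False
```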

How Can Sentant Help Your Startup Master AI Data Security?

At Sentant, we understand that for a high-growth startup, security can’t be a "roadblock"—it has to be an accelerator. We specialize in helping AI founders build the "Next-Gen" security foundations required to win enterprise trust without dragging down your engineering velocity.

Here is how we partner with you:

- Fractional CISO & AI Governance: Most startups don't need a full-time CISO yet, but they do need expert guidance. We provide fractional security leadership to help you navigate the EU AI Act, build your AI risk register, and ensure your roadmap meets the highest compliance standards.

- Architecting the "Zero-Trust" AI Pipeline: We don't just give you a list of problems; we help your engineers implement the fixes. From moving your vector databases into Private VPCs to setting up per-tenant encryption and hardware-bound access for your research teams, we build the "fortress" around your RAG pipeline.

- Automated Guardrail Implementation: Our team helps you deploy and tune the specialized "Security LLM" layers and output filters mentioned in this guide. We ensure that your front-gate defense is robust enough to stop prompt injections while remaining fast enough to maintain a seamless user experience.

- Shadow AI Discovery & Control: We help you gain immediate visibility into how your team is using AI. By deploying modern monitoring tools, we identify where sensitive company data might be leaking into unsanctioned third-party models and help you redirect that energy into your secure, approved internal tools.

- SOC 2 & Enterprise Readiness: When that Fortune 500 prospect hands you a 200-question security assessment, we sit in the foxhole with you. We help you document your AI data lineage and access controls so you can answer every question with confidence and close the deal faster.

By making AI data security a feature rather than a chore, you won't just stay out of the headlines—you'll build the kind of mature organization that enterprises are actually willing to pay for.

How is your team currently handling the data "handshake" between your users and your models?

Frequently Asked Questions

If we use a third-party LLM (like OpenAI), isn't our data already safe?

Not necessarily. Even with "zero-retention" enterprise modes, you are responsible for what you send. If you don't redact data on your end, it still crosses the wire and could be logged in ways that break your compliance promises.

How do we stop "Prompt Injection" from leaking our internal secrets?

You need a multi-layered defense. Use a "Security LLM" to screen inputs and strict output filters to catch the AI if it tries to spill the beans. Also, make sure your RAG system can only "see" documents that the specific user is already allowed to access.

Can someone "reverse-engineer" my model to see the training data?

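Yes, in some cases. The classic version is called a "Model Inversion" attack, and a close cousin is training-data extraction: if an AI is over-trained on specific sensitive records, an attacker can query it until it reconstructs that data. According to 2025 security benchmarks, unauthorized data reconstruction attempts have increased by 40% year-over-year. Using differential privacy during training is one of the most effective defenses, because it puts a mathematical limit on how much any single record can influence the model.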
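For a feel of what "differential privacy during training" means mechanically, here is a minimal sketch of the core DP-SGD update, the most widely used approach: clip each example's gradient to a maximum norm, sum, add Gaussian noise scaled to that norm, then average. It operates on plain Python lists for a toy model, and the function names and parameter values are hypothetical. Python's `random` is not a cryptographically secure noise source, and the sketch does not track the privacy budget (ε) across steps; production libraries such as Opacus (PyTorch) or TensorFlow Privacy handle that accounting.

```python
import math
import random


def clip(grad: list[float], max_norm: float) -> list[float]:
    """Scale a per-example gradient down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def dp_sgd_step(weights, per_example_grads, lr, max_norm, noise_multiplier, rng):
    """One DP-SGD update: clip each example, sum, add Gaussian noise, average."""
    summed = [0.0] * len(weights)
    for grad in per_example_grads:
        for i, g in enumerate(clip(grad, max_norm)):
            summed[i] += g
    # Noise std is tied to the clipping bound, the most any one record can contribute.
    sigma = noise_multiplier * max_norm
    batch = len(per_example_grads)
    noisy_mean = [(s + rng.gauss(0.0, sigma)) / batch for s in summed]
    return [w - lr * g for w, g in zip(weights, noisy_mean)]


if __name__ == "__main__":
    rng = random.Random(0)  # not a cryptographically secure noise source
    w = [0.0, 0.0]
    grads = [[3.0, 4.0], [0.1, -0.2], [-0.5, 0.5]]
    w = dp_sgd_step(w, grads, lr=0.1, max_norm=1.0, noise_multiplier=1.1, rng=rng)
    print(w)
```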

What exactly is "Shadow AI"?

It’s when your team uses unapproved AI tools for work. For example, an engineer pasting company code into a free personal AI account to debug it. Recent industry reports indicate that approximately 70% of employees admit to using unsanctioned AI tools at least once a week.

Does the EU AI Act actually matter for a US startup?

Absolutely. If you have users in the EU or your AI's results affect people there, you have to comply. For "High-Risk" systems (like hiring or credit scoring), the rules are very strict. Penalties are tiered: breaching the high-risk obligations can lead to fines of up to €15 million or 3% of total worldwide annual turnover, and using prohibited AI practices up to €35 million or 7%.

Will Pizzano, CISM, is the founder of Sentant, a managed security and IT services provider that has helped dozens of companies achieve SOC 2 compliance. If you’re interested in help obtaining SOC 2 compliance, contact us.